Singular Value Decomposition (SVD) is one of the most important tools in linear algebra, and it can be tricky to understand. In this log, I derive SVD from scratch using linear operators, then review how it is computed and where it is applied.

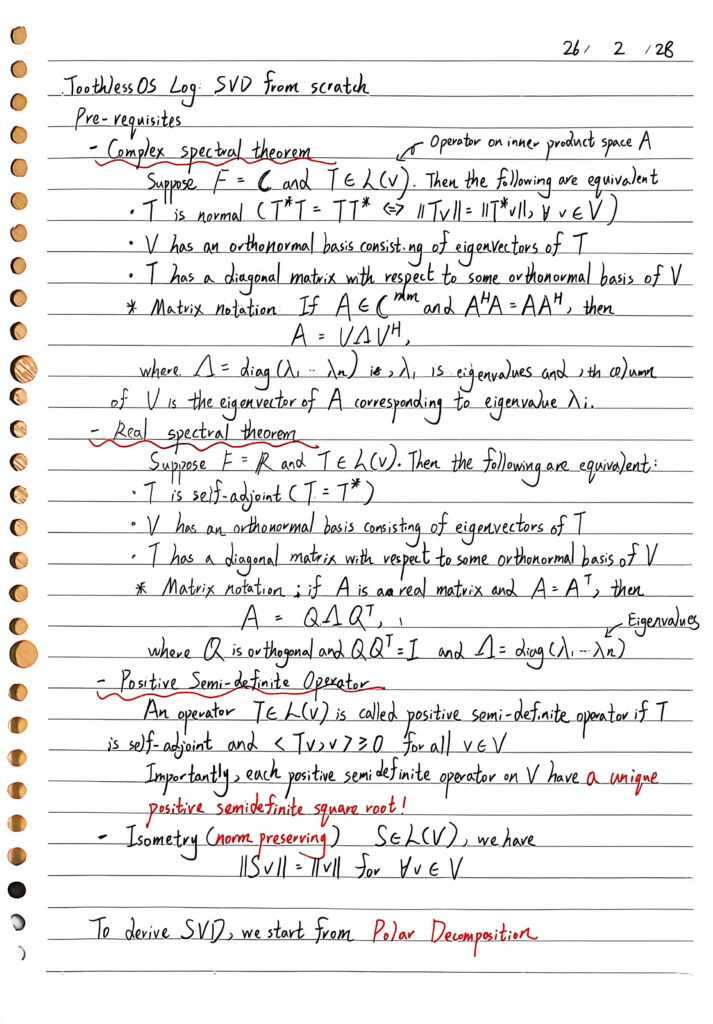

Prerequisites

Key concepts and tools:

Spectral theorem, positive semi-definite operators, self-adjoint operators, normal operators, isometries

If you are not familiar with these concepts, the book Linear Algebra Done Right is a great reference.

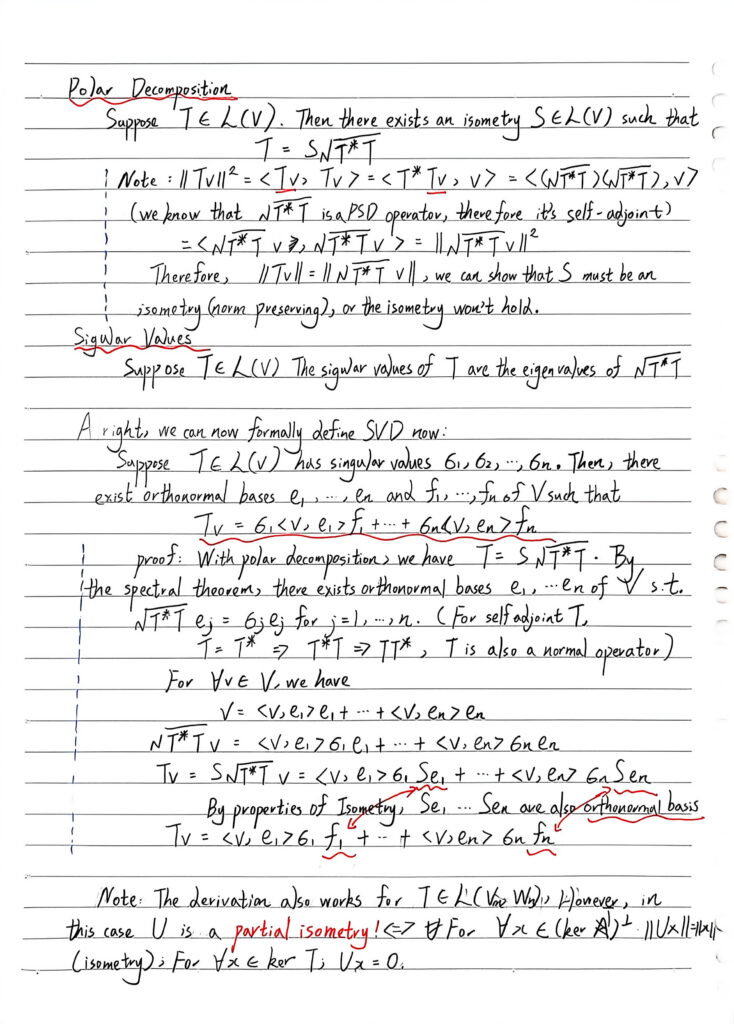

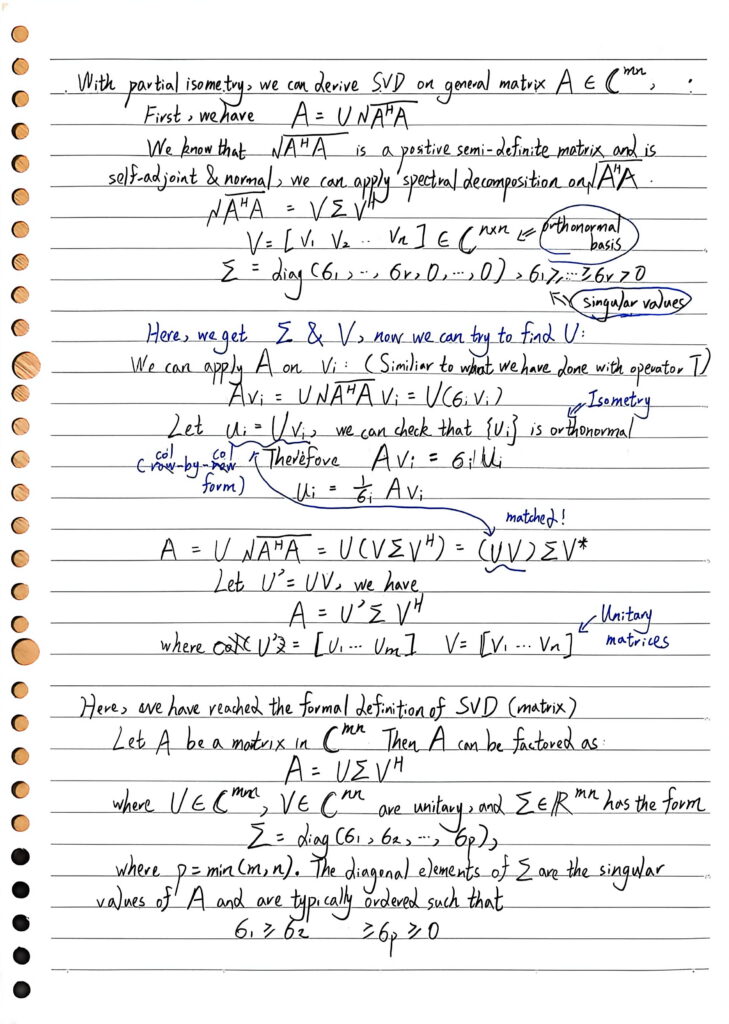

Derivation

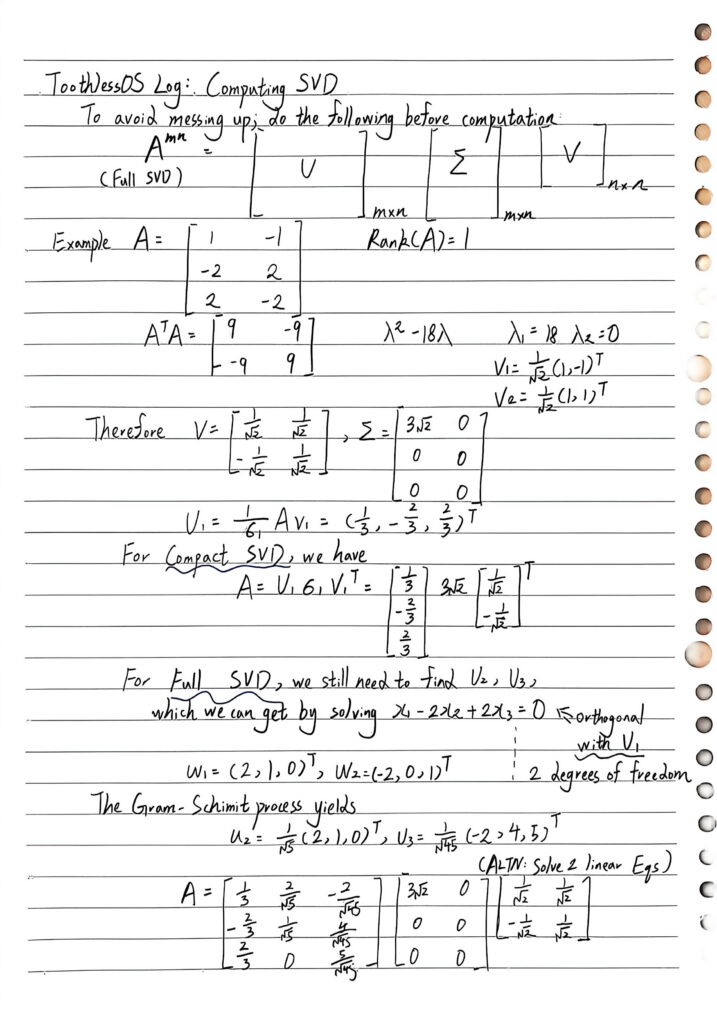
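As a numerical companion to the derivation, here is a minimal NumPy sketch of the standard operator-based construction, which the figure is assumed to follow: apply the spectral theorem to the self-adjoint, positive semi-definite operator AᵀA to get an orthonormal eigenbasis v₁, ..., vₙ, take σᵢ = √λᵢ, and set uᵢ = Avᵢ/σᵢ for the nonzero σᵢ. The function name `svd_via_spectral_theorem` and the cutoff `rtol` are illustrative choices, not part of any library.

```python
import numpy as np

def svd_via_spectral_theorem(A, rtol=1e-10):
    """Thin SVD of A built from the spectral decomposition of A^T A (hypothetical helper).

    Returns U (m x r), sigma (r,), V (n x r) with A = U @ diag(sigma) @ V.T,
    where r is the numerical rank.
    """
    A = np.asarray(A, dtype=float)
    # A^T A is self-adjoint and positive semi-definite, so the spectral theorem
    # gives an orthonormal eigenbasis with real, non-negative eigenvalues.
    eigvals, V = np.linalg.eigh(A.T @ A)
    # eigh returns eigenvalues in ascending order; put the largest first.
    order = np.argsort(eigvals)[::-1]
    eigvals, V = eigvals[order], V[:, order]
    # Drop eigenvalues that are rounding noise (cutoff relative to the largest).
    keep = eigvals > rtol * eigvals[0]
    eigvals, V = eigvals[keep], V[:, keep]
    # Singular values are the square roots of the eigenvalues of A^T A.
    sigma = np.sqrt(eigvals)
    # u_i = A v_i / sigma_i; these are orthonormal because
    # <A v_i, A v_j> = <A^T A v_i, v_j> = lambda_i * delta_ij.
    U = (A @ V) / sigma
    return U, sigma, V

rng = np.random.default_rng(0)
A = rng.standard_normal((5, 3))
U, sigma, V = svd_via_spectral_theorem(A)
```

This mirrors the proof rather than replacing a library SVD: forming AᵀA squares the condition number, so very small singular values lose accuracy, which is why the cutoff is applied to the eigenvalues relative to the largest one.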

Computation

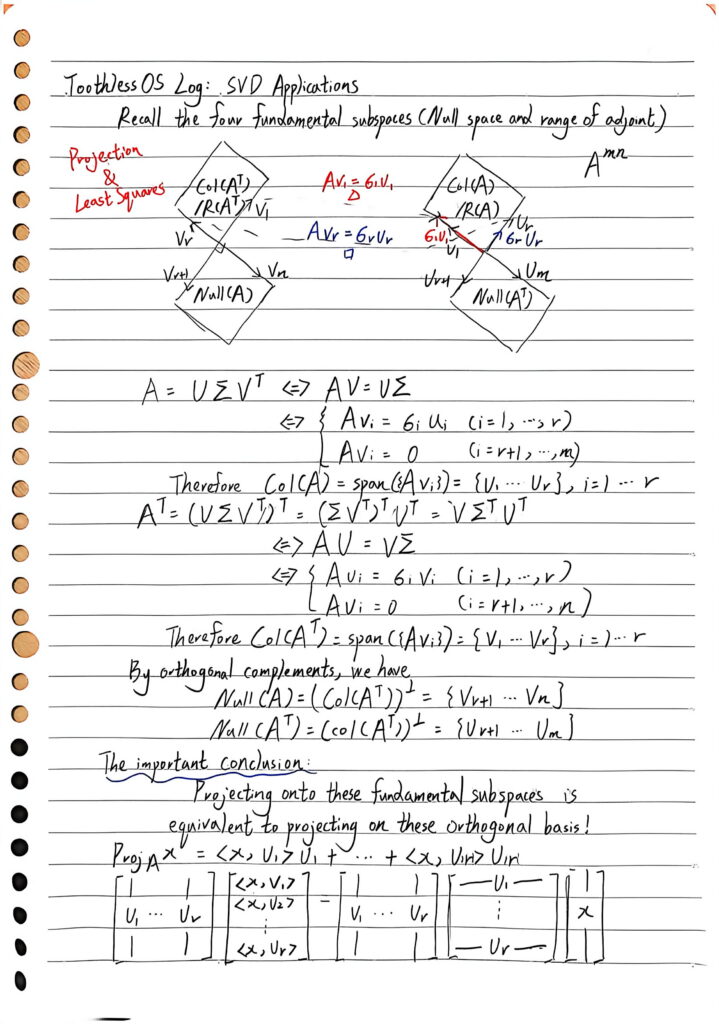

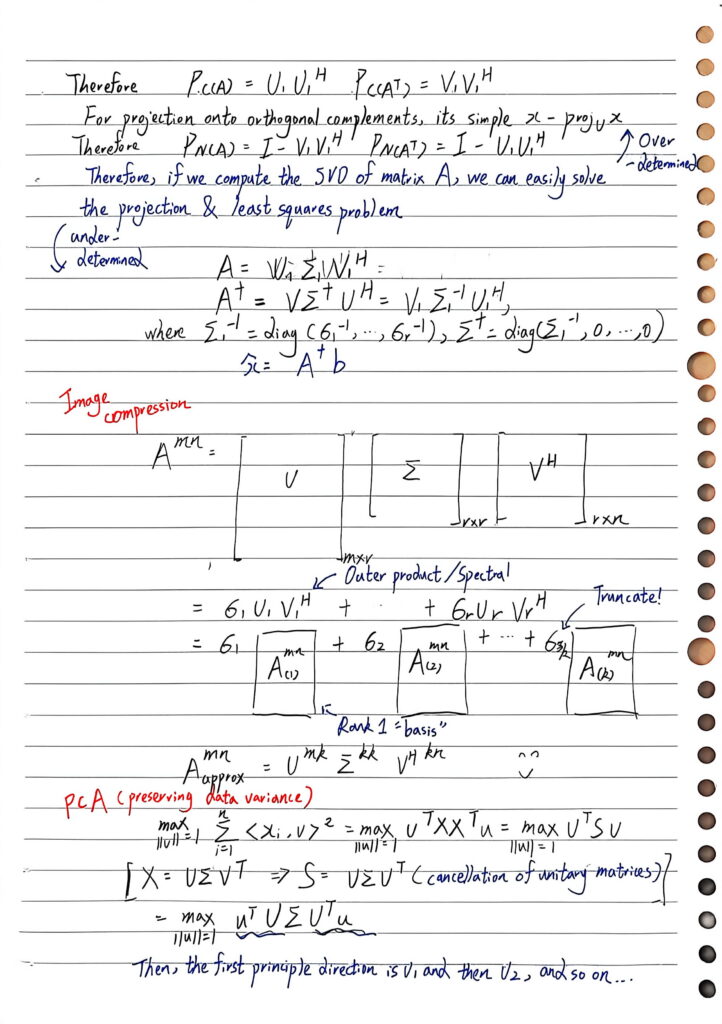
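In practice one usually calls a library routine that works on A directly, such as NumPy's LAPACK-backed `np.linalg.svd`, instead of forming AᵀA. A minimal sketch, assuming NumPy: thin versus full factors, reconstruction, and a small experiment of my own construction that compares `np.linalg.svd` with square roots of the eigenvalues of BᵀB for a matrix B whose smallest singular value is 1e-9.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.standard_normal((6, 4))

# Thin SVD: U is 6x4, s holds 4 singular values in descending order, Vt is 4x4.
U, s, Vt = np.linalg.svd(A, full_matrices=False)
# Full SVD: U_full is 6x6, i.e. extended to an orthonormal basis of R^6.
U_full, s_full, Vt_full = np.linalg.svd(A, full_matrices=True)

# The thin factors reconstruct A = U diag(s) V^T.
A_rebuilt = U @ np.diag(s) @ Vt

# Ill-conditioned example: B = Q1 diag(1, 1e-9) Q2^T with random orthonormal factors.
Q1, _ = np.linalg.qr(rng.standard_normal((5, 2)))  # 5x2, orthonormal columns
Q2, _ = np.linalg.qr(rng.standard_normal((2, 2)))  # 2x2 orthogonal
B = Q1 @ np.diag([1.0, 1e-9]) @ Q2.T

# Singular values computed directly from B.
s_direct = np.linalg.svd(B, compute_uv=False)
# Square roots of eigenvalues of B^T B (rounding can make the small one negative, hence abs).
s_via_eig = np.sqrt(np.abs(np.linalg.eigvalsh(B.T @ B)))[::-1]

print("direct SVD:     ", s_direct)
print("sqrt eig(B^T B):", s_via_eig)
```

The direct SVD recovers the small singular value to many digits. The eigenvalue route can only resolve singular values down to roughly √ε ≈ 1e-8 of the largest one, so its smallest entry here should not be trusted; the exact printed value depends on the seed and platform.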

Applications

Key points: projection, least squares, image compression, and PCA
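Once the SVD is available, projection, least squares and PCA each take only a few lines. Here is a minimal NumPy sketch, assuming rows of X are samples for PCA; the helper names `project_onto_range`, `least_squares_svd` and `pca` and the cutoff `rtol` are hypothetical. Projection onto the column space uses P = U_r U_rᵀ, least squares uses the pseudoinverse x = V_r Σ_r⁻¹ U_rᵀ b, and PCA takes the SVD of the centered data matrix.

```python
import numpy as np

def project_onto_range(A, b, rtol=1e-12):
    """Orthogonal projection of b onto the column space of A, via P = U_r U_r^T."""
    U, s, _ = np.linalg.svd(A, full_matrices=False)
    r = int(np.sum(s > rtol * s[0]))           # numerical rank
    Ur = U[:, :r]                               # orthonormal basis of range(A)
    return Ur @ (Ur.T @ b)

def least_squares_svd(A, b, rtol=1e-12):
    """Minimum-norm least-squares solution x = V_r diag(1/s_r) U_r^T b (pseudoinverse)."""
    U, s, Vt = np.linalg.svd(A, full_matrices=False)
    r = int(np.sum(s > rtol * s[0]))
    return Vt[:r].T @ ((U[:, :r].T @ b) / s[:r])

def pca(X, k):
    """Top-k principal directions, scores and explained variances; rows of X are samples."""
    Xc = X - X.mean(axis=0)                     # center each feature
    _, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:k]                         # principal directions, one per row
    scores = Xc @ components.T                  # sample coordinates (equals U[:, :k] * s[:k])
    explained_var = s[:k] ** 2 / (X.shape[0] - 1)
    return components, scores, explained_var

rng = np.random.default_rng(1)
A = rng.standard_normal((8, 3))
b = rng.standard_normal(8)
x = least_squares_svd(A, b)
p = project_onto_range(A, b)

X = rng.standard_normal((50, 4))
components, scores, explained_var = pca(X, k=2)
```

The least-squares solution should agree with `np.linalg.lstsq`, and A x equals the projection p, because the least-squares fit is exactly the orthogonal projection of b onto the column space of A.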

I’d like to elaborate on the image compression part, because it gives an important insight into matrix multiplication. When you first learn linear algebra, matrix multiplication is taught as “row-column” inner products that “fill in the blanks” of the result one entry at a time. SVD suggests a different view: use outer products instead of inner products, and write the matrix as a weighted sum of rank-1 matrices, A = σ₁u₁v₁ᵀ + σ₂u₂v₂ᵀ + ... + σᵣuᵣvᵣᵀ, where the weights are the singular values. That’s exactly how to compress an image: keep only the terms for the k largest singular values!
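To make the outer-product view concrete, here is a minimal NumPy sketch on a synthetic 64×64 “image” (a smooth pattern plus noise standing in for real pixel data; the `truncate` helper is hypothetical). It checks that the rank-1 terms σᵢuᵢvᵢᵀ sum back to the matrix, then keeps only the k largest. By the Eckart-Young theorem the truncated sum is the best rank-k approximation, and its spectral-norm error equals σ_{k+1} (`s[k]` with zero-based indexing).

```python
import numpy as np

rng = np.random.default_rng(2)
# Synthetic stand-in for a grayscale image: smooth low-rank structure plus a little noise.
x = np.linspace(0, 1, 64)
img = (np.outer(np.sin(3 * x), np.cos(2 * x))
       + 0.5 * np.outer(x, x ** 2)
       + 0.01 * rng.standard_normal((64, 64)))

U, s, Vt = np.linalg.svd(img, full_matrices=False)

# The matrix as a weighted sum of rank-1 outer products: sum_i s_i u_i v_i^T.
outer_sum = sum(s[i] * np.outer(U[:, i], Vt[i]) for i in range(len(s)))

def truncate(U, s, Vt, k):
    """Rank-k approximation: keep only the terms for the k largest singular values."""
    return (U[:, :k] * s[:k]) @ Vt[:k]

k = 2
img_k = truncate(U, s, Vt, k)

# Storing the rank-k factors takes k * (m + n + 1) numbers instead of m * n.
m, n = img.shape
stored = k * (m + n + 1)

# Spectral-norm error of the truncation; Eckart-Young says this equals s[k].
err = np.linalg.norm(img - img_k, 2)
```

Here the rank-2 factors need 258 numbers instead of 4096. For a real photo you would load the pixel array and choose k by how quickly the singular values decay.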

You will probably see more decompositions of this kind if you go on to courses such as graph-matrix analysis. Believe me, they can be very intriguing!
